Building Classroom Economics Games with AI-Assisted Coding

Background

Classroom games are well established in economics teaching. The harder question is why more lecturers do not build bespoke ones. In my experience, the obstacle is not a lack of pedagogical conviction but a lack of technical implementation capacity. Games that faithfully reproduce the mechanics of a specific model, with live shared state across student devices, real-time instructor dashboards, and parameters matched to the formal theory, require web-development skills that most economics lecturers simply do not have.

I teach Advanced Financial Markets & Institutions, a final-year module with classes of 25–30 students across typically five to seven parallel seminar groups. The module covers Akerlof (1970), Stiglitz and Weiss (1981), and Diamond and Dybvig (1983), whose results are analytically demanding and counterintuitive: exactly the kind of material that benefits from experiential, game-based teaching. I had clear ideas about what such games should do, but no realistic way to build them myself. Previously, that would have meant settling for simpler formats such as Excel simulations, relying on existing platforms such as Veconlab (University of Virginia), or securing a funded project with a professional developer. This case study describes a further option that has recently become available: iterative development in collaboration with an AI coding assistant.

Development process

Two games were developed through an extended, iterative conversation with an AI coding assistant (Claude, Anthropic). The workflow was not a single prompt producing a finished product. It was closer to working with a very fast developer who never needed the brief restated: I described what I wanted pedagogically, the AI produced working code, I tested it, and I came back with what needed to change. Repeated cycles of this, across several weeks of teaching, produced applications that I could not have built on my own.

My contribution throughout was domain expertise and pedagogical design: specifying that Act I of the lending game should present applicants with hidden risk types to generate adverse selection; that Act II should use a deterministic return function so the class’s data points would trace the Stiglitz–Weiss inverted-U curve exactly; and that the bank-run game needed a sunspot round in which an irrelevant signal could nonetheless trigger a run. The AI’s contribution was to translate these specifications into working browser applications with Firebase Realtime Database integration, cross-device shared state, and live instructor dashboards, none of which I could have coded from scratch.

The speed of iteration proved transformative. After testing the lending game in a first seminar, I found that the instructor dashboard was too data-dense and became illegible when projected onto a classroom wall. By the following session, the AI had redesigned it with larger text, colour-coded tiles and a stacked layout optimised for projection at a distance. When a large number of concurrent users caused noticeable sluggishness, the polling logic was restructured within the same conversation. When the automatic transition between the game’s two acts left no space for class discussion, I requested an instructor-triggered pause and had it working before the next group session. This kind of rapid, classroom-informed iteration is the distinctive advantage of the AI-assisted workflow.

What was produced

The process yielded two browser-based games covering three core models, each accompanied by pre-session preparation documents and post-session materials for further reading and reflection.

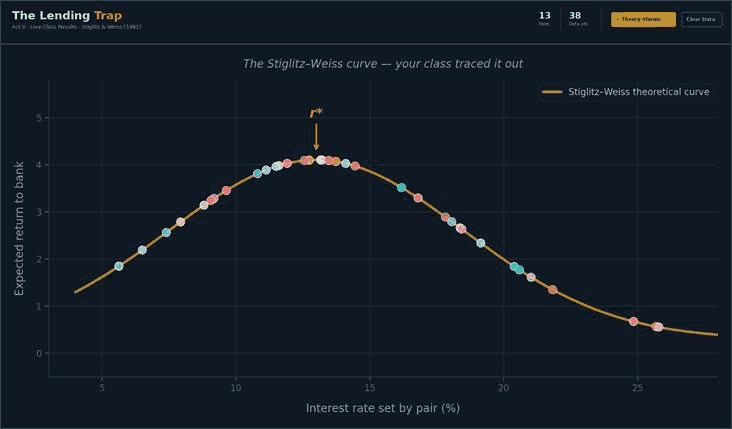

The Lending Trap has a two-act structure. In Act I, pairs of students play as loan officers reviewing eight applications with visible characteristics but hidden risk types, experiencing Akerlof’s adverse selection through their own portfolio losses. In Act II, the same pairs set interest rates on a slider and observe how expected return changes, collectively tracing out the Stiglitz–Weiss inverted-U curve from their own data. A live instructor dashboard projects the class’s rate–return scatter plot, and a theory overlay can be revealed during the debrief.

Figure 1. The Lending Trap instructor dashboard (Act II). Each dot is one pair’s rate experiment. Because the return function is deterministic, points lie exactly on the Stiglitz–Weiss curve, which is revealed during the debrief.
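The inverted-U shape arises because a higher rate raises what each repaid loan earns but lowers the share of loans that are repaid. The following Python is a minimal sketch of that trade-off, not the game's actual return function: the linear repayment probability, the names `repayment_probability` and `expected_return`, and the `sensitivity` value of 0.5 are all illustrative assumptions.

```python
def repayment_probability(rate, sensitivity=0.5):
    """Hypothetical: the share of loans repaid falls linearly as the rate rises."""
    return max(0.0, 1.0 - sensitivity * rate)


def expected_return(rate, sensitivity=0.5):
    """Expected net return per unit lent, assuming nothing is recovered on default."""
    return repayment_probability(rate, sensitivity) * (1.0 + rate) - 1.0


# Evaluate the curve on a slider-like grid: 0%, 5%, ..., 100%.
rates = [i / 20 for i in range(21)]
curve = [(r, expected_return(r)) for r in rates]

# The bank-optimal rate is the peak of the curve, not the highest rate on offer.
best_rate, best_return = max(curve, key=lambda point: point[1])
print(f"Return peaks at a rate of {best_rate:.0%} with expected return {best_return:.3f}")
```

Because the function has no random component, every pair that chooses the same rate sees the same return, which is what lets a class's scatter plot fall exactly on the curve.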

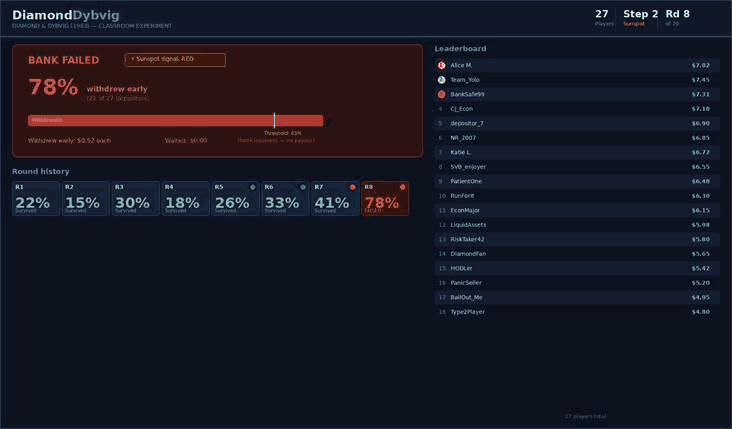

The Diamond–Dybvig Game is a multiplayer coordination game. Each student decides individually whether to withdraw their deposit or leave it in the bank, knowing that the bank fails if withdrawals exceed a threshold. Twenty rounds are played across three stages: a baseline establishing the coordination problem; a sunspot stage, in which an irrelevant public signal tests whether it can trigger a run; and a deposit insurance stage showing how guarantees eliminate the bad equilibrium. A shared leaderboard and live round history sustain engagement.

Figure 2. The Diamond–Dybvig instructor projection after a bank run in the sunspot stage. The round history shows withdrawal rates building across rounds before the run occurs.
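The round logic described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the game's code: the function name `resolve_round`, the payout values, and the rule that withdrawers always recover the early payout are hypothetical. Deposit insurance is modelled, again as an assumption, by guaranteeing the patient payout to anyone who waits.

```python
def resolve_round(choices, threshold, early_payout=1.0,
                  patient_payout=1.5, insured=False):
    """Resolve one round of a withdraw-or-wait coordination game.

    choices:   dict mapping player name to "withdraw" or "wait"
    threshold: share of withdrawals above which the bank fails
    Returns (bank_fails, payoffs), where payoffs maps player name to payoff.
    """
    if not choices:
        raise ValueError("a round needs at least one player")

    withdrawals = sum(1 for c in choices.values() if c == "withdraw")
    bank_fails = withdrawals / len(choices) > threshold

    payoffs = {}
    for name, choice in choices.items():
        if choice == "withdraw":
            # Assumption: early withdrawers always get their deposit back.
            payoffs[name] = early_payout
        elif bank_fails and not insured:
            # Waiting in a run without insurance loses the deposit.
            payoffs[name] = 0.0
        else:
            # Bank survives, or the guarantee covers those who waited.
            payoffs[name] = patient_payout
    return bank_fails, payoffs


run = {"A": "withdraw", "B": "withdraw", "C": "withdraw", "D": "wait"}
print(resolve_round(run, threshold=0.5))
print(resolve_round(run, threshold=0.5, insured=True))
```

Under these assumed payoffs, waiting is the better choice only if enough others also wait, which is the coordination problem; with `insured=True`, waiting pays at least as much as withdrawing whatever the others do.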

Both games are standalone HTML files with no build step or installation required. They can be deployed, for example via Netlify Drop, in under two minutes and accessed by students on any device through a shared URL.

What I learned

Clear pedagogical specification is the essential human contribution. The AI cannot decide that the Act I-to-Act II transition needs a discussion pause, or that the bank-run game should vary its failure threshold across rounds to prevent students from memorising a single equilibrium. These are teaching-design decisions that emerge from knowing the theory, knowing the students, and observing what happens in the room. What the AI does is make it possible to act on those decisions quickly.

Classroom testing is non-negotiable. Every problem that mattered, from the illegible dashboard to sluggish polling and pacing assumptions that turned out to be wrong, was invisible during solo testing and surfaced only with a real class on university Wi‑Fi. The AI-assisted workflow makes fixing these problems fast, but it cannot substitute for the testing itself.

Domain expertise remains essential for verification. The game’s parameters must faithfully reproduce the model’s predictions. The AI can implement a return function, but you still need to check that it matches Stiglitz and Weiss. That verification step requires exactly the subject knowledge that a lecturer already has and a developer typically would not.
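One part of that check can be automated. The sketch below tests only the shape of a candidate return function, namely that it rises to a single interior peak and then falls; it says nothing about whether the peak sits at the right rate. The helper `is_inverted_u` and both candidate functions are hypothetical examples, not taken from the games.

```python
def is_inverted_u(returns, tol=1e-12):
    """True if the sequence strictly rises to one interior peak, then strictly falls."""
    if len(returns) < 3:
        return False
    peak = max(range(len(returns)), key=returns.__getitem__)
    if peak == 0 or peak == len(returns) - 1:
        return False  # the maximum sits at an endpoint, so the curve is monotone
    rising = all(b - a > tol
                 for a, b in zip(returns[:peak], returns[1:peak + 1]))
    falling = all(a - b > tol
                  for a, b in zip(returns[peak:], returns[peak + 1:]))
    return rising and falling


rates = [i / 20 for i in range(21)]  # 0%, 5%, ..., 100%

# Hypothetical candidate: repayment probability falls as the rate rises.
candidate = [(1.0 - 0.5 * r) * (1.0 + r) - 1.0 for r in rates]

# Hypothetical faulty version: repayment probability ignores the rate.
faulty = [0.7 * (1.0 + r) - 1.0 for r in rates]

print(is_inverted_u(candidate), is_inverted_u(faulty))
```

A faulty implementation in which the rate never affects repayment produces a straight upward line and fails the check, which is the kind of error that is easy to miss by eye in a long conversation with the assistant.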

Technical constraints can become pedagogical features. The Diamond–Dybvig game’s varying thresholds were initially a design choice to add variety, but they became a useful teaching point about how fragility depends on the parameters of the banking contract. Likewise, the deterministic return function in The Lending Trap, which places every data point exactly on the theoretical curve, turned out to be more powerful than a noisy simulation would have been.

Conclusion

The historical barrier to bespoke, game-based teaching in economics has been technical implementation. AI-assisted coding does not eliminate the need for pedagogical design skill; it amplifies it. A lecturer who can articulate clearly what a game should teach and how it should work can now produce sophisticated, classroom-ready applications without a funded project, a development team, or prior programming experience. The investment shifts away from learning to code and towards learning to specify, test, and iterate: activities that are already central to good teaching design.

For anyone considering a similar approach, the most important advice I can offer is to start with a model whose key insight is a single, surprising result. Bank runs and adverse selection are ideal examples. It is also important to plan for multiple rounds of classroom testing, because the first version will not be right. The value of the AI-assisted workflow is that the second and third versions can follow within hours rather than weeks.

Acknowledgements

My thanks to Laetitia Lepetit, who also trialled The Lending Trap with her students at the University of Limoges, and to Kamilya Suleymenova, who encouraged me to write up and share this practice.

The games described in this case study were developed using Claude (Anthropic) as an AI coding assistant. A draft of this case study was also prepared with AI assistance; the final text was reviewed and revised by the author.

References

Akerlof, G.A. (1970), The Market for “Lemons”: Quality Uncertainty and the Market Mechanism, Quarterly Journal of Economics, 84(3), 488–500. https://doi.org/10.2307/1879431

Diamond, D.W. and Dybvig, P.H. (1983), Bank Runs, Deposit Insurance, and Liquidity, Journal of Political Economy, 91(3), 401–419. https://doi.org/10.1086/261155

Stiglitz, J.E. and Weiss, A. (1981), Credit Rationing in Markets with Imperfect Information, American Economic Review, 71(3), 393–410. https://www.jstor.org/stable/1802787

This case study is licensed as CC-Attribution-ShareAlike